The future of console gaming looks much brighter with ray tracing, which can make light behave the way real light rays do. Ray tracing matters because it significantly improves the realism and visual quality of 3D rendering. It simulates the behavior of light and calculates how light interacts with objects, which produces lifelike reflections, shadows, and global illumination effects. This advanced rendering technique adds depth, realism, and cinematic appeal to visual content, making it invaluable for industries such as gaming, film, architecture, and product visualization. Until recently, the technique was too computationally expensive for video games and was used mostly in the film industry.

For decades, games relied on rasterization to produce realistic graphics, but the technique has limitations, especially where lighting is concerned. Game developers came up with clever techniques to approximate reflections, shadows, and other lighting effects, but many effects that ray tracing makes possible remain impractical with rasterization. Many of these effects are pre-computed ("baked") into the scene, so they always look the same. They can be convincing, but they are not dynamic.

3D ray tracing is changing the way game developers work. It lets lighting respond as the player interacts with the scene, with the light adjusting automatically.

Traditional 3D rendering has long been used to create polished digital images and is invaluable to many industries. It needs less computing power and is faster, but it cannot match the realism of ray tracing.

If you enjoy the special effects in your favorite sci-fi movies, you can thank ray tracing. It helps make those effects look realistic, often indistinguishable from footage captured in real life.

Video games can now achieve effects similar to those in movies and render them in real time in response to the player's actions. In film production, by contrast, ray tracing runs on expensive hardware and traces millions of light rays per frame, which is a time-consuming process.

In real life, how we see objects is determined by how light is reflected, absorbed, or refracted by them. Photons from a light source bounce off objects and enter the eye, and the brain then interprets them.

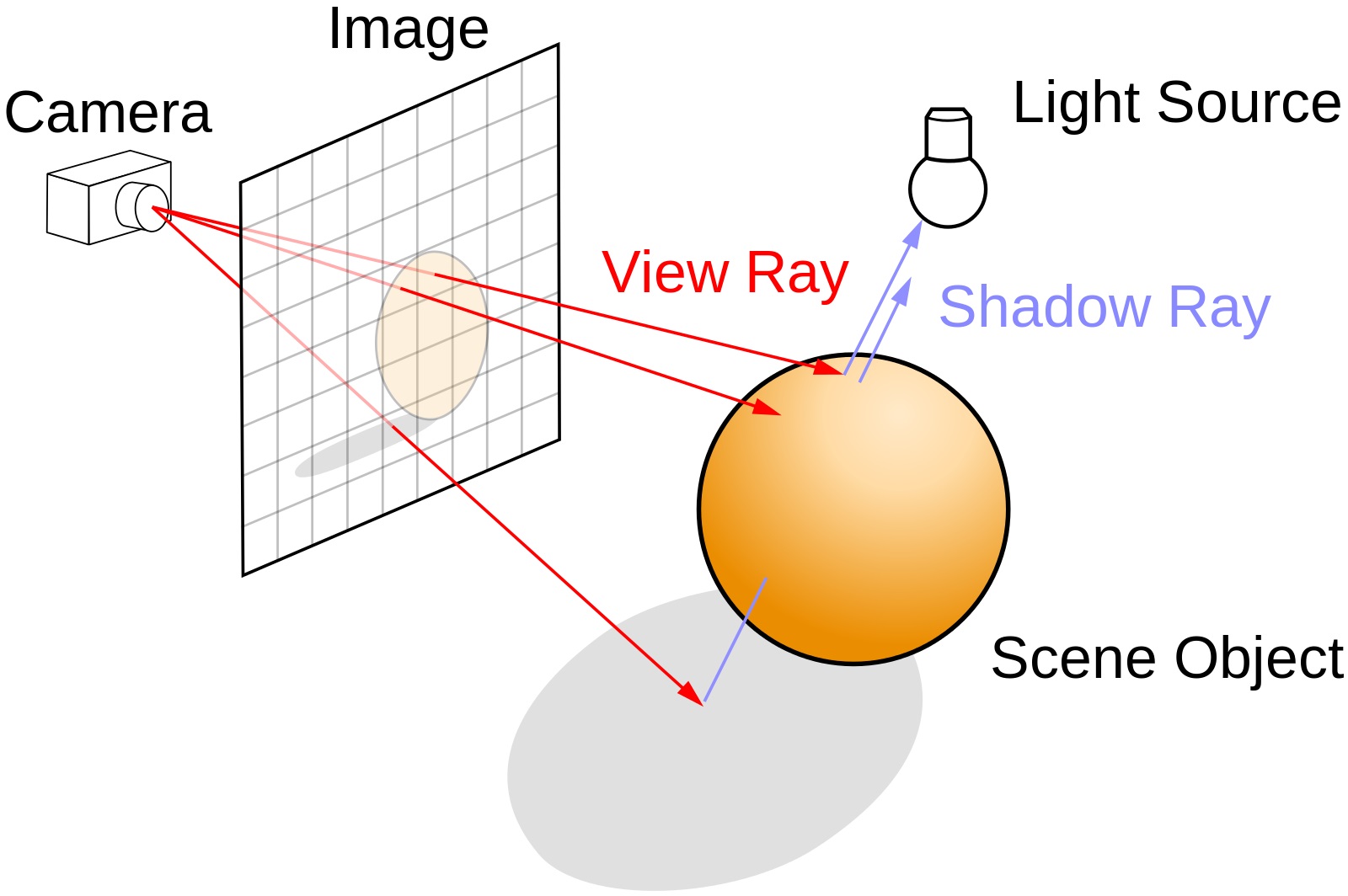

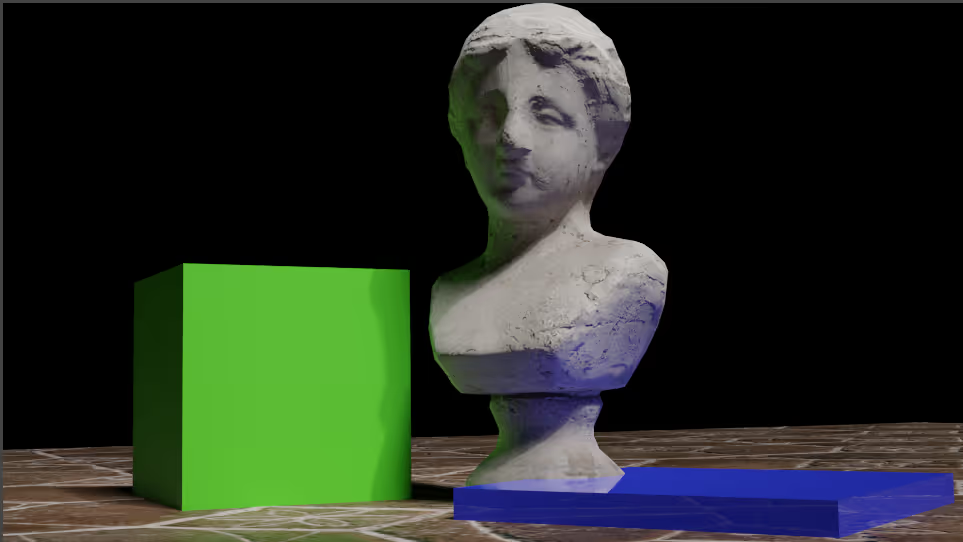

Ray tracing works in much the same way, but in reverse. The method generates an image by “tracing” the path of light from an imaginary eye or camera through each pixel and into the scene. This is more efficient than tracing rays outward from the light sources, because most of those rays would never reach the camera. Starting at the camera means no computing power is wasted on rays that cannot contribute to the image.
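The "start at the camera" idea can be sketched as generating one primary ray per pixel. This is a minimal sketch, assuming a pinhole camera at the origin looking down the -z axis with a 60° vertical field of view. The function name `primary_ray_direction` and its parameters are hypothetical and not taken from any particular renderer.

```python
import math

def primary_ray_direction(px, py, width, height, fov_deg=60.0):
    """Return a normalized direction for the ray through the centre of pixel (px, py).

    Assumes a pinhole camera at the origin looking down the -z axis,
    with +y up and a vertical field of view of fov_deg degrees.
    """
    aspect = width / height
    scale = math.tan(math.radians(fov_deg) / 2)
    # Map the pixel centre to [-1, 1] screen coordinates, corrected for aspect ratio.
    x = (2 * (px + 0.5) / width - 1) * aspect * scale
    y = (1 - 2 * (py + 0.5) / height) * scale
    z = -1.0
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)

# One primary ray per pixel, starting from the camera rather than from the lights.
width, height = 4, 3
rays = [primary_ray_direction(px, py, width, height)
        for py in range(height) for px in range(width)]
print(len(rays))  # 12
```

Each of these directions is then followed into the scene. Only rays that actually pass through the image are ever computed.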

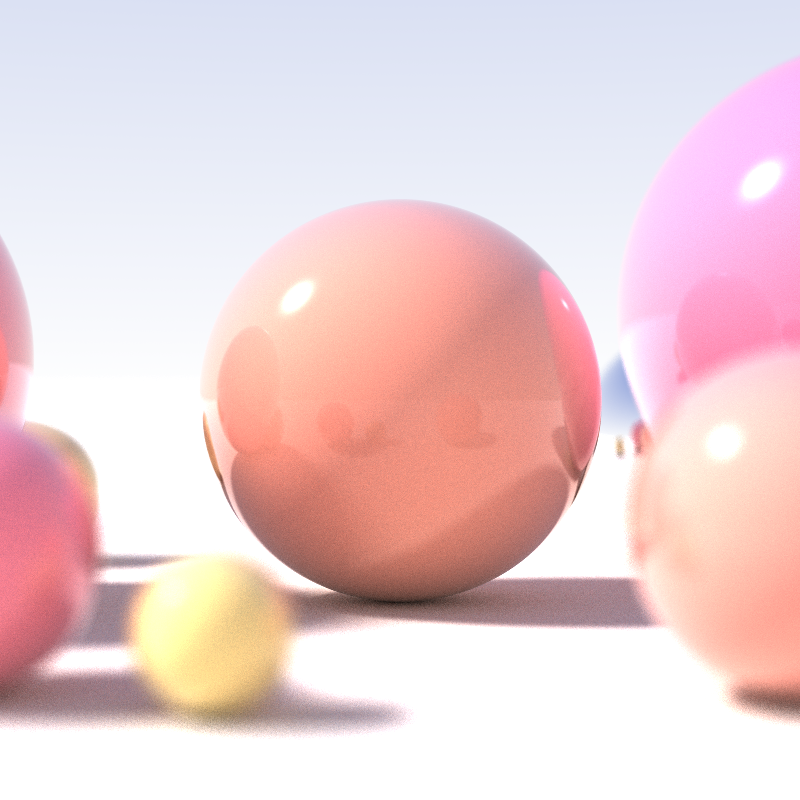

The science behind 3D ray tracing allows developers to simulate the way light really works. Scenes are lit more realistically, and even objects outside the frame can be accurately reflected in the visible scene.

In 3D ray tracing, the software's algorithms start at the camera. Rays travel outward from the camera and bounce off the objects in their path, picking up those objects' color and reflective properties along the way. At each point a ray hits, the software determines which light sources illuminate it.

Because ray tracing is computationally intensive, it was impractical for video game graphics until recently. To map the 3D virtual world onto a 2D monitor, the computer must compute a color for each of the more than 2 million pixels (1920 × 1080 = 2,073,600) of a 1080p display, many times per second.
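To put the pixel count in perspective, here is a back-of-the-envelope calculation. It assumes a target of 60 frames per second and the bare minimum of one primary ray per pixel. Both numbers are illustrative assumptions, not requirements.

```python
width, height = 1920, 1080
pixels = width * height
print(pixels)  # 2073600

fps = 60            # assumed target frame rate
rays_per_pixel = 1  # the bare minimum: one primary ray per pixel
rays_per_second = pixels * rays_per_pixel * fps
print(rays_per_second)  # 124416000
```

That is over 124 million rays per second before counting any bounces, shadow rays, or extra samples per pixel. Each of those multiplies the total.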

One or more rays from the camera must be projected through each pixel, and the computer must check whether each ray intersects any of the scene's triangles. 3D computer graphics are built from polygon meshes, usually made of triangles, and a scene can contain millions of them. The algorithm finds the nearest triangle a ray hits. It then uses data such as that triangle's color and its distance from the camera to determine the final color of the pixel.
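The ray-triangle test at the heart of this step can be sketched with the Möller–Trumbore algorithm. This is one widely used intersection method, and the article does not say which one any particular renderer uses. The helper names are hypothetical. The function returns the distance `t` along the ray, which is what lets a renderer pick the nearest hit.

```python
def sub(a, b): return (a[0] - b[0], a[1] - b[1], a[2] - b[2])
def dot(a, b): return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]
def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def intersect_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Möller–Trumbore test. Returns the distance t along the ray, or None on a miss."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    p = cross(direction, edge2)
    det = dot(edge1, p)
    if abs(det) < eps:              # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det         # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, edge1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, q) * inv_det
    return t if t > eps else None   # only hits in front of the ray origin count

tri = ((-1, -1, -5), (1, -1, -5), (0, 1, -5))
print(intersect_triangle((0, 0, 0), (0, 0, -1), *tri))  # 5.0
print(intersect_triangle((0, 0, 0), (0, 0, 1), *tri))   # None (triangle is behind the ray)
```

A renderer would run a test like this against candidate triangles and keep the hit with the smallest `t`. Real renderers also use acceleration structures so they do not have to test every triangle.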

The software also traces rays that bounce off a triangle or pass through it. Tracing a single ray per pixel does not produce a lifelike image. More rays give higher image quality, but the computational cost rises with them.
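The trade-off between rays per pixel and quality can be sketched by averaging several randomly jittered rays through one pixel. This is a minimal sketch. `shade` is a hypothetical stand-in for "trace a ray and return its brightness": the scene is a sharp edge that covers exactly half of pixel (0, 0). The work grows linearly with the number of samples.

```python
import random

def shade(x, y):
    """Hypothetical scene: white to the left of the line x = y, black elsewhere."""
    return 1.0 if x < y else 0.0

def render_pixel(px, py, samples, rng):
    """Average `samples` randomly jittered rays through pixel (px, py)."""
    total = 0.0
    for _ in range(samples):
        # Each sample stands for one ray through a random point inside the pixel.
        total += shade(px + rng.random(), py + rng.random())
    return total / samples

rng = random.Random(42)
for n in (1, 4, 64, 1024):
    print(n, "rays:", round(render_pixel(0, 0, n, rng), 3))
```

With one ray the pixel is either fully white or fully black. As the ray count grows, the estimate settles near the true value of 0.5, at a proportionally higher cost.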

An early milestone for ray tracing in computer graphics was the LINKS-1 Computer Graphics System, built at Osaka University in 1982. A ray-traced video created on it was presented at the Fujitsu Pavilion in Tsukuba in 1985. Since then, ray tracing has become fast enough to run in real time, and it is now used in interactive 3D graphics applications. These include demoscene productions, computer and video games, and image rendering. The latest PlayStation and Xbox consoles include hardware support for ray tracing, so gamers can enjoy its effects in real time.

3D rendering is the process of producing two-dimensional images for screens from a 3D model. The images are generated from data describing the color, material, and texture of the model's surfaces.

Rendering has been used in computer graphics since the 1960s, when Ivan Sutherland, the “father of computer graphics,” created Sketchpad while at MIT. Even before then, William Fetter used it to create a depiction of a pilot to simulate the cockpit space.

Rendering techniques have evolved since then, but the basic concept resembles photography. The rendering program composes a shot and uses digital lighting to create a realistic image that captures all the important details.

Rendering times vary by method, from a fraction of a second to several days for a single frame.

Real-time rendering is used for interactive media. It must calculate and display each frame fast enough for smooth interaction, typically 30 to 60 frames per second and sometimes 120 or more. Real-time software can simulate visual effects such as motion blur, lens flares, and depth of field.
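The frame rate translates directly into a time budget for everything the renderer does per frame. The short calculation below shows that budget for a few common targets. The chosen frame rates are examples only.

```python
def frame_budget_ms(fps):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / fps

for fps in (30, 60, 120):
    print(f"{fps} fps -> {frame_budget_ms(fps):.2f} ms per frame")
```

At 120 frames per second, all geometry, lighting, and effects for a frame must fit in about 8.33 ms. This is why real-time renderers lean on fast approximations.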

Films and documentaries use non-real-time rendering, where a single frame can take anywhere from a few seconds to several days, depending on the complexity of the scene. The rendered frames are stored on disk and later played back in sequence at a fixed frame rate to create the illusion of movement.

Even though rendering quality keeps improving, the process is still slow. Some large companies have invested in render farms to work around this problem, while many individual artists and designers rely on powerful workstations.

Rendering techniques have evolved considerably over the years.

One of the earliest techniques, rasterization, treats the model as a mesh of polygons. Each vertex stores information about its position, texture, and color. The vertices are projected onto the image plane, which is perpendicular to the camera's viewing direction, where they outline the polygons' edges. The pixels inside those outlines are then filled in with color, much like sketching an outline before painting it in.
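Here is a minimal sketch of rasterizing one triangle. The vertices are projected with a perspective divide, and then every pixel whose centre falls inside the projected triangle is filled. The inside test uses edge functions, one common approach; the article does not prescribe a specific method. It assumes camera-space vertices with the camera at the origin looking down -z. The names `project`, `edge`, `rasterize`, and the `focal` parameter are hypothetical.

```python
def project(vertex, width, height, focal=1.0):
    """Perspective-project a camera-space point (x, y, z), z < 0, to pixel coordinates.

    Assumes a camera at the origin looking down -z; `focal` is a hypothetical
    focal-length parameter controlling the field of view.
    """
    x, y, z = vertex
    sx = focal * x / -z          # perspective divide: farther points move toward the centre
    sy = focal * y / -z
    # Map [-1, 1] screen space to pixel coordinates (pixel y grows downward).
    return ((sx + 1) * 0.5 * width, (1 - sy) * 0.5 * height)

def edge(a, b, p):
    """Signed area test: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize(tri, width, height):
    """Return the set of pixels whose centres fall inside the projected triangle."""
    a, b, c = (project(v, width, height) for v in tri)
    if edge(a, b, c) == 0:       # degenerate (zero-area) triangle covers nothing
        return set()
    covered = set()
    for py in range(height):
        for px in range(width):
            p = (px + 0.5, py + 0.5)
            w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
            # Inside if the pixel centre is on the same side of all three edges.
            if (w0 >= 0 and w1 >= 0 and w2 >= 0) or (w0 <= 0 and w1 <= 0 and w2 <= 0):
                covered.add((px, py))
    return covered

triangle = [(-1, -1, -2), (1, -1, -2), (0, 1, -2)]  # hypothetical camera-space vertices
covered = rasterize(triangle, 8, 8)
for py in range(8):
    print("".join("#" if (px, py) in covered else "." for px in range(8)))
```

The projected vertices act as the outline, and the loop plays the role of filling in the pixels between them.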

This process has improved over the years with anti-aliasing. Anti-aliasing smooths jagged object edges by blending edge pixels into the surrounding pixels, often by sampling the scene at a higher resolution than the final image.
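One form of anti-aliasing, supersampling, can be sketched by testing a small grid of sub-samples inside each pixel. The fraction that falls inside the shape is then used to blend its color with the background. This is a minimal sketch assuming a 4 × 4 grid and a hypothetical shape (everything left of x = 2.5). Production renderers use a variety of other anti-aliasing schemes.

```python
def coverage(px, py, inside, n=4):
    """Fraction of an n x n grid of sub-samples in pixel (px, py) that lie inside the shape."""
    hits = 0
    for i in range(n):
        for j in range(n):
            x = px + (i + 0.5) / n
            y = py + (j + 0.5) / n
            hits += inside(x, y)
    return hits / (n * n)

def blend(fg, bg, alpha):
    """Mix two RGB colors; alpha is the fraction of the pixel covered by the foreground."""
    return tuple(round(f * alpha + b * (1 - alpha)) for f, b in zip(fg, bg))

def inside_shape(x, y):
    """Hypothetical shape: everything to the left of the vertical line x = 2.5."""
    return x < 2.5

for px in range(4):
    a = coverage(px, 0, inside_shape)
    print(px, a, blend((255, 0, 0), (0, 0, 0), a))
```

The pixel straddling the edge gets a color halfway between red and black instead of snapping to one or the other. That blending is what smooths the staircase effect.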

Rasterization on its own struggles with overlapping surfaces, because the renderer must decide which surface is in front. One solution is the Z-buffer, which stores a depth value for every pixel and keeps only the closest surface. Ray casting takes a different approach: it casts rays into the model from the camera's point of view, and the first surface each ray hits is the one that appears in the render.
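Ray casting's "first surface hit" rule can be sketched by intersecting a ray with every object and keeping the smallest hit distance. To keep the sketch short it uses spheres rather than triangles. The scene format `(name, center, radius)` and the function names are hypothetical.

```python
import math

def hit_sphere(origin, direction, center, radius):
    """Return the nearest positive distance t where the ray hits the sphere, or None.

    Assumes `direction` is normalized, so the quadratic's leading coefficient is 1.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None                    # the ray misses the sphere
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):   # try the nearer intersection first
        if t > 1e-9:
            return t
    return None

def cast(origin, direction, scene):
    """Ray casting: return the name of the first object the ray hits, or None."""
    nearest_t, nearest_name = math.inf, None
    for name, center, radius in scene:
        t = hit_sphere(origin, direction, center, radius)
        if t is not None and t < nearest_t:
            nearest_t, nearest_name = t, name
    return nearest_name

scene = [("far sphere", (0, 0, -10), 2.0), ("near sphere", (0, 0, -5), 1.0)]
print(cast((0, 0, 0), (0, 0, -1), scene))
```

Even though the far sphere is listed first, the ray reports the near sphere because it is hit at a smaller distance. Overlap is resolved per ray, with no separate depth buffer.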

Ray casting still could not simulate shadows, reflections, and refractions properly, so ray tracing was developed to improve on it. Ray tracing depicts light more faithfully than ray casting because the primary rays cast from the camera spawn secondary rays when they hit a surface. Depending on the surface, these can be shadow rays sent toward each light source, reflection rays, or refraction rays. If another object blocks a shadow ray before it reaches the light, that point is in shadow.
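The secondary rays can be sketched as a small recursive tracer in the spirit of classic Whitted-style ray tracing. At each hit it sends a shadow ray toward a point light and, for mirror-like surfaces, a reflection ray. This is a minimal sketch under stated assumptions: spheres only, a single point light, grey-scale brightness, and no refraction rays. The scene dictionaries (`center`, `radius`, `reflectivity`) and all function names are hypothetical.

```python
import math

def dot(a, b): return sum(x * y for x, y in zip(a, b))
def add(a, b): return tuple(x + y for x, y in zip(a, b))
def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def scale(a, s): return tuple(x * s for x in a)
def normalize(a): return scale(a, 1.0 / math.sqrt(dot(a, a)))

def hit_sphere(origin, direction, center, radius):
    """Nearest positive hit distance for a normalized ray, or None."""
    oc = sub(origin, center)
    b = dot(direction, oc)
    disc = b * b - (dot(oc, oc) - radius * radius)
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > 1e-6:
            return t
    return None

def nearest_hit(origin, direction, spheres):
    """Return (t, sphere) for the closest hit, or None."""
    best = None
    for s in spheres:
        t = hit_sphere(origin, direction, s["center"], s["radius"])
        if t is not None and (best is None or t < best[0]):
            best = (t, s)
    return best

def trace(origin, direction, spheres, light, depth=0, max_depth=3):
    """Return grey-scale brightness; shadow rays for direct light, reflection rays for mirrors."""
    hit = nearest_hit(origin, direction, spheres)
    if hit is None:
        return 0.0                                   # background is black
    t, s = hit
    point = add(origin, scale(direction, t))
    normal = normalize(sub(point, s["center"]))
    start = add(point, scale(normal, 1e-4))          # nudge off the surface to avoid self-hits

    # Shadow ray: is any object between this point and the light?
    to_light = sub(light, start)
    dist_light = math.sqrt(dot(to_light, to_light))
    to_light = scale(to_light, 1.0 / dist_light)
    blocker = nearest_hit(start, to_light, spheres)
    in_shadow = blocker is not None and blocker[0] < dist_light
    direct = 0.0 if in_shadow else max(0.0, dot(normal, to_light))

    color = (1 - s["reflectivity"]) * direct
    # Reflection ray: mirror the incoming direction about the surface normal.
    if s["reflectivity"] > 0 and depth < max_depth:
        reflected = sub(direction, scale(normal, 2 * dot(direction, normal)))
        color += s["reflectivity"] * trace(start, reflected, spheres, light, depth + 1, max_depth)
    return color

# Hypothetical scene: a matte sphere in front of the camera, lit from behind the camera.
matte = {"center": (0, 0, -5), "radius": 1.0, "reflectivity": 0.0}
light = (0, 0, 5)
print(trace((0, 0, 0), (0, 0, -1), [matte], light))
# A second sphere behind the camera blocks the path to the light.
blocker = {"center": (0, 0, 2), "radius": 0.5, "reflectivity": 0.0}
print(trace((0, 0, 0), (0, 0, -1), [matte, blocker], light))
```

The first call prints a brightness of about 1.0. The second prints 0.0, because the shadow ray from the hit point is blocked before it reaches the light.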

A later development is the rendering equation, which attempts to describe how light travels through a scene. It treats every surface as a potential source of light, whether emitted or reflected, instead of counting only the designated light sources. The resulting equation tries to account for all light arriving at a point, including both direct illumination and indirect light bounced off other surfaces.
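For reference, the rendering equation is usually written in the form introduced by James Kajiya in 1986. The notation below is the standard textbook one and does not come from this article. $L_o$ is the light leaving point $\mathbf{x}$ in direction $\omega_o$. $L_e$ is the light the surface emits itself. $f_r$ is the surface's reflectance function (BRDF), and $L_i$ is the light arriving from direction $\omega_i$. $\mathbf{n}$ is the surface normal, and the integral runs over the hemisphere $\Omega$ above the point:

$$
L_o(\mathbf{x}, \omega_o) = L_e(\mathbf{x}, \omega_o) + \int_{\Omega} f_r(\mathbf{x}, \omega_i, \omega_o)\, L_i(\mathbf{x}, \omega_i)\, (\omega_i \cdot \mathbf{n})\, d\omega_i
$$

The incoming term $L_i$ is itself the outgoing light of whatever surface lies in direction $\omega_i$. Because of that, the equation covers light bounced off other objects as well as light from lamps. This recursion is why renderers typically approximate it by following rays bounce by bounce.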

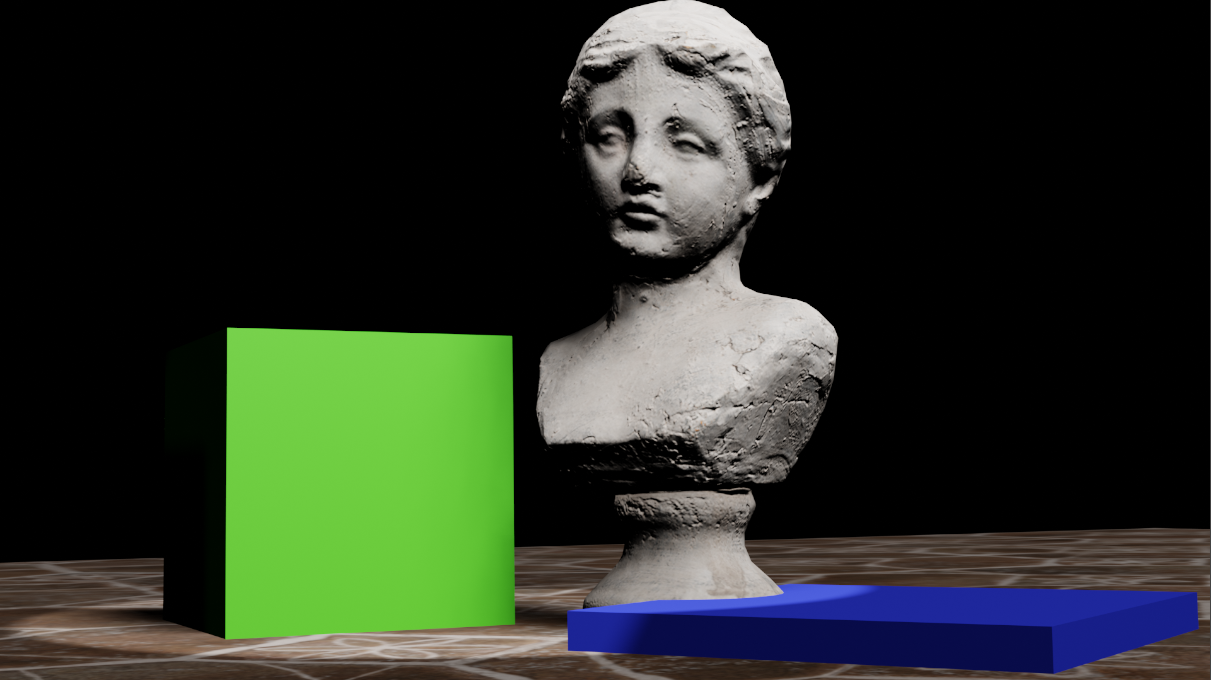

3D rendering is used in many industries because it is cheaper and faster than building physical prototypes. As a result, rendered presentations are now popular in architecture and engineering.

In the film industry, 3D rendering is used to produce high-definition animated movies. With high-definition visual effects and computer-generated imagery, there are almost no limits to creating the perfect shot.

The gaming industry has benefited significantly from photorealistic rendering and high-definition images. These give gamers a more immersive experience, and developers aim to keep making games as realistic as possible.

The marketing industry also uses renders to make products look realistic in images posted online and in catalogs. Rendering is budget-friendly and makes promotions more engaging.

According to a recent report, the 3D animation market is expected to continue to grow at a Compound Annual Growth Rate (CAGR) of 11% from 2019 to 2025. In 2018, it was valued at $13.75 billion. So far, the largest share of the market using 3D technology is the media and entertainment industries, with manufacturing coming second. Architecture and construction are the third largest users of 3D technologies.
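To see what an 11% CAGR implies, you can compound the 2018 value forward. This is purely illustrative arithmetic, not a figure from the report. It assumes the 11% rate applies to each of the seven years from 2019 through 2025, starting from the $13.75 billion 2018 value.

```python
def project_value(base, rate, years):
    """Compound growth: base * (1 + rate) ** years."""
    return base * (1 + rate) ** years

base_2018 = 13.75   # USD billions, the 2018 value quoted above
cagr = 0.11
# Assumption: 11% growth applies to each year from 2019 through 2025 (7 years).
value_2025 = project_value(base_2018, cagr, 2025 - 2018)
print(f"about ${value_2025:.1f} billion in 2025")
```

Under that assumption the market would reach roughly $28.5 billion by 2025, about double its 2018 size.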

3D ray tracing produces a high degree of visual realism, but it is more time-intensive because it requires more compute cycles. That is why it has traditionally been used where time is not critical and images can be rendered slowly. It is the ideal method when extremely accurate reflections and shadows are needed, such as for still images and film and TV visual effects. In applications where speed is essential, like video games, fully ray tracing every frame at that quality is still too slow. Games therefore typically combine rasterization with selected ray-traced effects such as reflections and shadows.

Traditional 3D rendering, on the other hand, is faster, and its quality keeps improving. Rendering software uses the GPU, the CPU, or both, but these applications are resource-intensive, and the hardware often needs upgrading.

Many variables must be considered to achieve a near-perfect render, but post-processing can help produce almost photorealistic images.

Ray tracing has begun appearing in the tools game developers use. Nvidia has integrated dedicated ray tracing hardware into its consumer-level RTX graphics cards. Microsoft has added ray tracing support to DirectX 12 (DirectX Raytracing, or DXR) for Windows and Xbox games. AMD has followed with hardware ray tracing in its RDNA 2-based Radeon GPUs, giving more gamers access to games that look closer to reality in real time.
